The multi-cpu R job submits the R code (mycode.R) to a single node (-N 1) on the general partition (-p general) with a twenty-minute run time limit (-t 00:20:00), a 5 GB memory limit (--mem=5g), and 12 tasks (-n 12). Note: Because the default is one cpu per task, -n 12 can be thought of as requesting 12 cpus (cores).

Also, for jobs needing 60%-100% of the cpus (cores) on a node (but not more than are on one node), the job will likely have a shorter wait time to start running if it is submitted to the snp partition instead of the general partition.
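The partition advice for jobs that need 60%-100% of one node's cores can be sketched as a small shell check. This is only an illustration: `suggest_partition` is a hypothetical helper, not a SLURM or Longleaf command, and the 24-core node used in the example calls is an assumed figure. The real per-node core count should be taken from the Longleaf Technical Specifications.

```shell
#!/bin/bash
# Hypothetical helper: suggest a partition for a single-node job.
# Usage: suggest_partition <cpus_requested> <cores_per_node>
suggest_partition() {
    local cpus=$1 cores=$2
    # 60%-100% of one node's cores: the text suggests snp may start sooner.
    if [ $((cpus * 100)) -ge $((cores * 60)) ] && [ "$cpus" -le "$cores" ]; then
        echo "snp"
    else
        # Outside that range the text gives no advice; fall back to general.
        echo "general"
    fi
}

# Example calls with an ASSUMED 24-core node.
suggest_partition 12 24   # 50% of the node: prints "general"
suggest_partition 20 24   # about 83% of the node: prints "snp"
```

The integer comparison `cpus * 100 >= cores * 60` avoids floating-point arithmetic, which plain shell does not support.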

The equivalent command-line method:

    module add r
    sbatch -p general -N 1 --mem=5g -n 1 -t 1- --wrap="Rscript mycode.R"

Note: Because the default is one cpu per task, -n 1 can be thought of as requesting just one cpu.
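The batch-script form of this single-cpu R job is not shown here. A minimal sketch follows, assuming the script simply carries the same options as `#SBATCH` directives. The filename `r_single.sl` is a hypothetical choice, and placing `module add r` inside the script is also an assumption. The snippet only writes the file, so it can be inspected before anything is submitted.

```shell
#!/bin/bash
# Write a single-cpu R job script to a file (hypothetical name: r_single.sl).
# The quoted 'EOF' keeps the shell from expanding anything inside the script.
cat > r_single.sl <<'EOF'
#!/bin/bash
#SBATCH -p general
#SBATCH -N 1
#SBATCH -n 1
#SBATCH --mem=5g
#SBATCH -t 1-

# Assumption: the R module is loaded inside the job script.
module add r
Rscript mycode.R
EOF

echo "Wrote r_single.sl; on the cluster it would be submitted with: sbatch r_single.sl"
```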

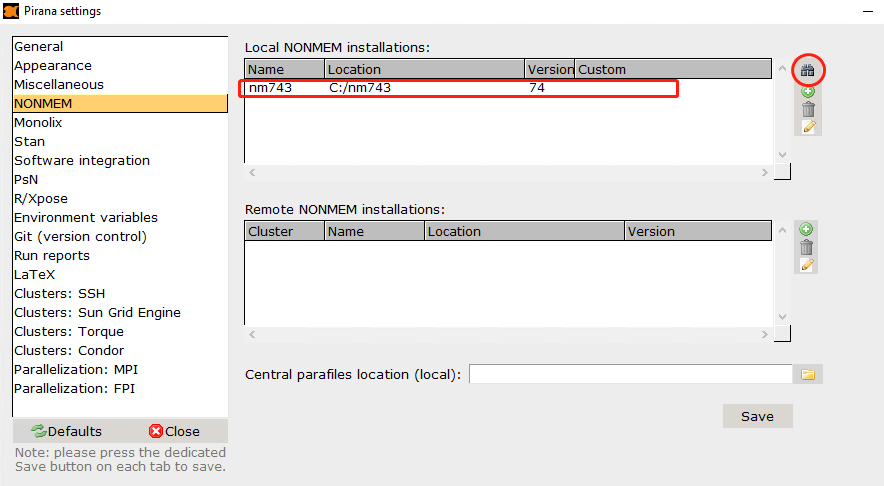


The above will submit the R code (mycode.R) requesting one task (-n 1) on a single node (-N 1) on the general partition (-p general) with a one day run time limit (-t 1-), and 5 GB memory for the job (--mem=5g).

A. Single cpu job submission script:

    #!/bin/bash
    #SBATCH -p general -N 1 -n 1 --mem=2g -t 05-00:00:00
    matlab -nodesktop -nosplash -singleCompThread -r mycode -logfile mycode.out

The above will submit the Matlab code (mycode.m) requesting one task (-n 1) on a single node (-N 1) on the general partition (-p general) with a 5 day run time limit (-t 05-00:00:00), and 2 GB memory for the job (--mem=2g).

The equivalent command-line method:

    module add matlab
    sbatch -p general -N 1 -n 1 --mem=2g -t 05-00:00:00 --wrap="matlab -nodesktop -nosplash -singleCompThread -r mycode -logfile mycode.out"

B. Multi cpu job submission script:

    #!/bin/bash
    #SBATCH -p general -N 1 -n 17 --mem=10g -t 02-00:00:00
    matlab -nodesktop -nosplash -singleCompThread -r mycode -logfile mycode.out

The above will submit the Matlab code (mycode.m) requesting 17 tasks (-n 17) on a single node (-N 1) on the general partition (-p general) with a 2 day run time limit (-t 02-00:00:00), and 10 GB memory (--mem=10g). Note: Because the default is one cpu per task, -n 17 can be thought of as requesting 17 cpus.

See Longleaf Technical Specifications to see how many cores each node in the general partition currently has. Send an email to request that your account be added to the snp partition, and then submit jobs that need most of a node's cores to the snp partition.

The equivalent command-line method:

    module add matlab
    sbatch -p general -N 1 -n 17 --mem=10g -t 02-00:00:00 --wrap="matlab -nodesktop -nosplash -singleCompThread -r mycode -logfile mycode.out"

C. Running the Matlab GUI:

    module add matlab
    srun -p interact -N 1 -n 1 --mem=4g --x11=first matlab -desktop -singleCompThread

The above will run the Matlab GUI on Longleaf and display it to your local machine. It will use one task (-n 1), on one node (-N 1) in the interact partition (-p interact), and have a 4 GB memory limit (--mem=4g).

You’ll need to specify SBATCH options as appropriate for your job and application.
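As an illustration of adapting the options to each job, the sketch below assembles an sbatch command line from a few parameters and prints it without submitting anything. `build_sbatch` is a hypothetical helper written for this example. Only the sbatch flags themselves (-p, -N, -n, --mem, -t, --wrap) are taken from the examples in this document.

```shell
#!/bin/bash
# Hypothetical helper: print (do not run) an sbatch command for a one-node job.
# Usage: build_sbatch <partition> <tasks> <mem> <time> <command>
# Note: <command> must not itself contain double quotes in this simple sketch.
build_sbatch() {
    local partition=$1 tasks=$2 mem=$3 time=$4 cmd=$5
    printf 'sbatch -p %s -N 1 -n %s --mem=%s -t %s --wrap="%s"\n' \
        "$partition" "$tasks" "$mem" "$time" "$cmd"
}

# The single-cpu R and Matlab examples, rebuilt from their options.
build_sbatch general 1 5g 1- "Rscript mycode.R"
build_sbatch general 1 2g 05-00:00:00 \
    "matlab -nodesktop -nosplash -singleCompThread -r mycode -logfile mycode.out"
```

Printing the command first makes it easy to review the partition, memory, and time limit before pasting it into a Longleaf session.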


These are just examples to give you an idea of how to submit jobs on Longleaf for some commonly used applications.

The latest release of NONMEM® includes these enhancements and more.

1. Population analysis methods available for handling a variety of PK/PD population analysis problems:
   - First Order Conditional Estimation (FOCE)
   - Importance Sampling Expectation-Maximization (IMP)
   - Stochastic Approximation Expectation-Maximization (SAEM)
   - Markov-Chain Monte Carlo Bayesian Analysis (BAYES, NUTS)
2. Parallel computing of a single problem over multiple cores or computers, for estimation, covariance assessment, simulation, nonparametric analysis and posthoc parameter and weighted residual diagnostic evaluation, significantly reducing completion time
3. Increased efficiency of dynamic memory allocation to handle very large problems, eliminating the need to recompile the NONMEM® program for unusually large problems

Additional features, some new to NONMEM 7.